The rate at which technology is advancing in the 21st century is astonishing. Remarkable achievements have been recorded, and new innovations seem to arrive almost every hour. In this article, we look at the future of robotics and what it has in store.

This pace of change shows no sign of slowing down anytime soon. If anything, it is likely to keep accelerating as time passes.

Robotics is one of the fields most affected by this rapid technological progress. That impact is changing established patterns in the field and reshaping it into something quite different from what it was before.

What Does Robotics Mean?

Robotics is a branch of engineering that involves the conception, design, manufacture, and operation of robots. The objective of the robotics field is to create intelligent machines that can assist humans in a variety of ways.

Robotics can take on several forms. A robot may resemble a human, or it may take the form of a software application, such as robotic process automation (RPA), which simulates how humans interact with software to perform repetitive, rules-based tasks.

While the field of robotics, and the exploration of the potential uses and functions of robots, grew substantially in the 20th century, the idea is certainly not a new one.

Types of Robots

Robots are versatile machines, as evidenced by their wide variety of forms and functions. Here’s a list of a few kinds of robots we see today:

#1. Healthcare

Robots in the healthcare industry do everything from assisting in surgery and physical therapy to helping people walk and moving through hospitals to deliver essential supplies such as medications or linens.

Healthcare robots have even contributed to the fight against the COVID-19 pandemic, filling and sealing testing swabs and producing respirators.

#2. Homelife

Look no further than a Roomba to find a robot in someone’s home. But home robots now do more than vacuum floors; they can mow lawns or augment tools like Alexa.

#3. Manufacturing

The field of manufacturing was the first to adopt robots, such as automobile assembly-line machines. Industrial robots handle various tasks like arc welding, material handling, steel cutting, and food packaging.

#4. Logistics

Everybody wants their online orders delivered on time, if not sooner. So companies employ robots to stock warehouse shelves, retrieve goods, and even conduct short-range deliveries.

#5. Space Exploration

Mars rovers such as Sojourner and Perseverance are robots. The Hubble telescope is also classified as a robot, as are deep-space probes like Voyager and Cassini.

#6. Military

Robots handle dangerous tasks, and few tasks are more dangerous than modern warfare.

Consequently, the military enjoys a diverse selection of robots equipped to handle many of the riskier jobs associated with war.

For example, there’s the Centaur, an explosive detection and disposal robot that searches for mines and IEDs; the MUTT, which follows soldiers around and carries their gear; and SAFFiR, which fights fires that break out on naval vessels.

#7. Entertainment

We already have toy robots, robot statues, and robot restaurants. As robots become more sophisticated, expect their entertainment value to rise accordingly.

#8. Travel

We only need to say three words: self-driving vehicles.

There are many more fields where robotics has a future. Robots can appear virtually anywhere automation and accuracy are needed.

A Brief History of Robotics

The term robotics is an extension of the word robot. One of the first uses of the word robot came from Czech writer Karel Čapek, who used it in his 1920 play Rossum’s Universal Robots (R.U.R.). The machines in the play got their name from the Czech word robota, meaning forced labor.

The word “robotics” was also coined by a writer. Russian-born American science-fiction writer Isaac Asimov first used the word in 1942 in his short story “Runaround.”

Asimov had a much brighter, more optimistic view of the robot’s role in human society than Čapek did.

He generally portrayed the robots in his short stories as helpful servants of humanity and viewed robots as “a better, cleaner race.” The Oxford English Dictionary credits Asimov as the first person to use the term “robotics.”

Asimov also proposed three “Laws of Robotics” that his robots, as well as the robot characters of many other science-fiction stories, obey. They are still widely cited today:

- A robot may not injure a human being or, through inaction, allow a human being to come to harm.

- A robot must obey the orders given to it by human beings except where such orders would conflict with the First Law.

- A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

Some Major Milestones in the History of Robotics

In 1949, an American-born British neurophysiologist and inventor named William Grey Walter introduced a pair of battery-powered, tortoise-shaped robots that could maneuver around objects in a room, guide themselves toward a source of light, and find their way back to a charging station.

They did so using the same components that remain crucial to robotics today: sensor technology, a responsive feedback loop, and logical reasoning.
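Walter’s tortoises were analog machines, so the following is not a model of their actual circuitry. It is a minimal Python sketch, under simplifying assumptions, of the same sense, decide, act feedback loop: a simulated robot with two forward-facing light sensors turns toward whichever side reads brighter until it reaches a light source. The light position `(10, 10)`, the distance-based sensor model, and the parameters `speed` and `gain` are all hypothetical.

```python
import math

LIGHT = (10.0, 10.0)  # hypothetical light source / "charging station" position


def intensity(x, y):
    """Light sensor model (assumed): brightness falls off with squared distance."""
    d2 = (x - LIGHT[0]) ** 2 + (y - LIGHT[1]) ** 2
    return 1.0 / (1.0 + d2)


def seek_light(x=0.0, y=0.0, heading=0.0, speed=0.2, gain=2.0, max_steps=500):
    """Run a sense -> decide -> act feedback loop until the robot reaches the light."""
    for step in range(max_steps):
        # Arrived: reading above 0.8 means the robot is within 0.5 units of the light.
        if intensity(x, y) > 0.8:
            return True, step, (x, y)
        # Sense: two "eyes" one unit ahead, angled 0.5 rad left and right.
        left = intensity(x + math.cos(heading + 0.5), y + math.sin(heading + 0.5))
        right = intensity(x + math.cos(heading - 0.5), y + math.sin(heading - 0.5))
        # Decide: turn toward the brighter side, in proportion to the imbalance.
        heading += gain * (left - right) / (left + right)
        # Act: take a small step forward along the new heading.
        x += speed * math.cos(heading)
        y += speed * math.sin(heading)
    return False, max_steps, (x, y)


arrived, steps, pos = seek_light()
print(arrived, steps, pos)
```

In this setup the robot curves toward the light and stops close to it. The proportional turn is what makes the loop self-correcting: the correction shrinks as the heading error shrinks.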

In 1961, the first industrial robotic arm, known as “Unimate,” went to work in a General Motors plant, lifting and stacking hot, die-cast metal parts.

Created by George Devol and his partner Joseph Engelberger, it could move up and down along the X and Y axes, had a rotatable, pincer-like gripper, and could follow a program of up to 200 movements stored in its memory.

Deployable for numerous tasks, most notably those too taxing or dangerous for humans, such as lifting 75-pound loads without tiring and working amid toxic fumes, the Unimate began the transformation of the auto industry into an arena of widespread automation.

First “Pick and Place” Robot

While six-axis Unimate-style arms can lift heavy payloads and manipulate them with precision, not all industrial labor requires strength.

In 1978, the Japanese automation researcher Hiroshi Makino designed the four-axis SCARA, or the “Selective Compliance Assembly Robot Arm,” engineered simply to pick something up, swivel around, and plop it down somewhere else with precision — all in one smooth motion.

It is the first example of what has come to be known as “pick and place” robots.

SCARA arms are generally less flexible and not as strong as six-axis arms, but they are much faster and able to rapidly insert small electronic components into place.

The arms sped up the manufacturing of everything from computer chips to watches and are still commonly used in global manufacturing today.
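The “pick up, swivel, put down” motion of a SCARA arm comes down to simple geometry. Treating the arm as two horizontal rotary joints (shoulder and elbow), one vertical linear joint, and a wrist rotation, the following Python sketch computes where the tool ends up for given joint values (forward kinematics) and which joint values reach a target pose (inverse kinematics). The link lengths `L1` and `L2` and the sign conventions are assumptions for illustration, not the specifications of Makino’s design.

```python
import math

L1, L2 = 0.40, 0.30  # hypothetical link lengths in metres


def forward(theta1, theta2, d3, theta4):
    """Forward kinematics of a 4-axis SCARA arm: joint values -> tool pose."""
    x = L1 * math.cos(theta1) + L2 * math.cos(theta1 + theta2)
    y = L1 * math.sin(theta1) + L2 * math.sin(theta1 + theta2)
    z = -d3                          # the linear joint lowers the tool vertically
    phi = theta1 + theta2 + theta4   # tool rotation about the vertical axis
    return x, y, z, phi


def inverse(x, y, z, phi, elbow=1):
    """Inverse kinematics: tool pose -> joint values (elbow=+1 or -1 picks the branch)."""
    # Law of cosines gives the elbow angle from the distance to the target.
    c2 = (x * x + y * y - L1 * L1 - L2 * L2) / (2 * L1 * L2)
    if abs(c2) > 1:
        raise ValueError("target is outside the arm's reach")
    theta2 = elbow * math.acos(c2)
    theta1 = math.atan2(y, x) - math.atan2(L2 * math.sin(theta2), L1 + L2 * math.cos(theta2))
    theta4 = phi - theta1 - theta2
    return theta1, theta2, -z, theta4


pose = forward(0.3, 0.8, 0.05, 0.1)
print(pose)
print(inverse(*pose))
```

Passing the output of `forward` into `inverse` recovers the original joint values when `elbow` matches the sign of `theta2`. The `elbow` argument exists because most reachable points can be reached with the elbow bent either way.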

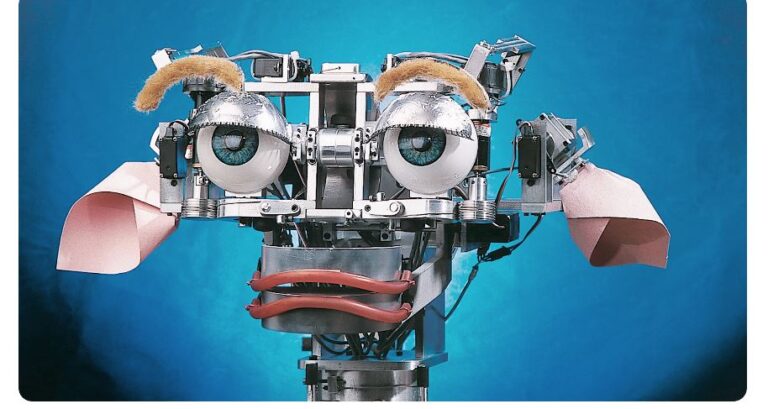

First “Sociable” Robot Designed to Provoke and React to Emotions

Cynthia Breazeal believes that if we are truly going to work alongside robots, trust them, and invite them into our homes, robots will need to be able to read people’s emotions and appear to have a personality.

With this in mind, she set to work creating Kismet, a robotic head designed to provoke — and react to — emotions. Twenty-one motors controlled an expressive pair of yellow eyebrows, red lips, pink ears, and big blue eyes, allowing Kismet to express a range of emotions, from happy to bored.

Audio sensors and algorithms picked up the vocal tone, so the robot would look downcast if you yelled at it, or curious if you spoke gently.

With Kismet, Breazeal proved the stickiness and appeal of a robot that has charm — laying the groundwork for the many voice assistants like Alexa, Siri, and Google Home that are now colonizing the world’s homes.

These developments suggest that much more is still to come in the future of robotics.

i-sobot Entertainment Robot

In 2007, TOMY launched i-sobot, a bipedal humanoid entertainment robot that can walk like a human, perform kicks and punches, and carry out entertaining tricks and special actions in its “Special Action Mode.”

The 2010s were defined by large-scale improvements in the availability, power, and versatility of commonly available robotic components, as well as the mass proliferation of robots into everyday life, which caused both optimistic speculation and new societal concerns.

Development of humanoid robots continued to advance; Robonaut 2 was launched to the International Space Station aboard Space Shuttle Discovery on the STS-133 mission in 2011 as the first humanoid robot in space.

While its initial purpose was to teach engineers how dextrous robots behave in space, the hope is that through upgrades and advancements, it could one day venture outside the station to help spacewalkers make repairs or additions to the station or perform scientific work.

By the end of the decade, humanoid and animal-like robots were capable of clearing difficult obstacle courses, maintaining balance, and even performing gymnastic feats.

However, the vast majority of robotic developments in the 2010s instead saw smaller, more specialized non-humanoid robots become cheaper, more capable, and more universal.

The 2010s also saw the growth of new software paradigms, which allowed robots and their AI systems to take advantage of the greater computing power that had become available.

Neural networks became increasingly well developed in the 2010s, with companies like Google offering free and open access to products like TensorFlow, which allowed robot manufacturers to quickly integrate neural nets that allowed for abilities like facial recognition and object identification in even the smallest, cheapest robots.

The Future of Robotics

Robots are expected to boost economic growth and productivity and create new career opportunities for many people worldwide. However, some forecasts warn of massive job losses, predicting the loss of 20 million manufacturing jobs by 2030, or that 30% of all jobs could be automated by then.

Certainly, increasingly sophisticated machines may populate our world, but for robots to be really useful, they’ll have to become more self-sufficient.

After all, it would be impossible to program a home robot with the instructions for gripping each and every object it ever might encounter. You want it to learn on its own, and that is where advances in artificial intelligence come in.

Today, advanced robots are popping up everywhere. For that, you can thank three technologies in particular: sensors, actuators, and AI (artificial intelligence).

Thanks to improved sensor technology and remarkable advances in machine learning and artificial intelligence, robots will keep moving from rote machines to collaborators with cognitive functions.

These fields, along with related ones, are on an upward trajectory, and robotics stands to benefit significantly from their progress.

We can expect growing numbers of increasingly sophisticated robots to be incorporated into more areas of life, working alongside humans.

The Future of Robotics: What’s the Use of AI in Robotics?

Artificial Intelligence (AI) improves human-robot interaction, opens up opportunities for collaboration, and raises the quality of work. The industrial sector already uses cobots, robots that work alongside humans to perform testing and assembly.

Advances in AI help robots mimic human behavior more closely, which is what many robots were created to do in the first place. Robots that act and think more like people can integrate better into the workforce and bring a level of efficiency that human employees cannot match.

Robot designers use Artificial Intelligence to give their creations enhanced capabilities like:

Computer Vision

Robots can identify and recognize the objects they encounter, discern details, and learn how to navigate around or avoid specific items.
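Production systems typically recognize objects with deep neural networks, but the basic recognize-then-act idea can be sketched with a much simpler technique, a k-nearest-neighbor classifier. In this Python sketch, the three-number feature vectors, the labeled examples, and the rule that chair legs should be avoided are all hypothetical.

```python
import math
from collections import Counter

# Hypothetical labelled examples: ((hue 0-1, roundness 0-1, size in metres), label).
EXAMPLES = [
    ((0.95, 0.90, 0.080), "apple"),
    ((0.90, 0.85, 0.075), "apple"),
    ((0.10, 0.20, 0.300), "chair_leg"),
    ((0.15, 0.25, 0.280), "chair_leg"),
    ((0.60, 0.95, 0.220), "ball"),
    ((0.55, 0.90, 0.200), "ball"),
]

AVOID = {"chair_leg"}  # hypothetical policy: obstacles the robot should steer around


def classify(features, k=3):
    """k-nearest-neighbour recognition: majority label among the k closest examples."""
    nearest = sorted(EXAMPLES, key=lambda ex: math.dist(ex[0], features))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def react(features):
    """Recognise an object, then decide whether to approach or avoid it."""
    label = classify(features)
    return label, ("avoid" if label in AVOID else "approach")


print(react((0.12, 0.22, 0.29)))
print(react((0.92, 0.88, 0.078)))
```

Features are kept on similar scales (size in metres rather than centimetres) so that no single feature dominates the distance; `math.dist` requires Python 3.8 or later.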

Manipulation

AI helps robots gain the fine motor skills needed to grasp objects without damaging them.
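One common way to grasp without crushing is force feedback: close the gripper slowly until a force sensor reports contact, then regulate the grip force toward a target. The Python sketch below simulates this with a hypothetical spring-like force sensor. The object width, stiffness, target force, and gains are illustrative assumptions, and the proportional update stays stable only while `stiffness * gain` is below 2.

```python
def contact_force(gap, object_width, stiffness):
    """Hypothetical force sensor: zero until the jaws touch, then spring-like (N)."""
    squeeze = object_width - gap
    return stiffness * squeeze if squeeze > 0 else 0.0


def grasp(object_width, stiffness, target=2.0, tol=0.05,
          open_gap=0.10, approach_step=0.0002, gain=0.0001, max_iters=5000):
    """Close a gripper until the grip force settles within `tol` of `target` newtons.

    Returns (final_gap_m, final_force_N, peak_force_N).
    """
    gap, peak = open_gap, 0.0
    for _ in range(max_iters):
        force = contact_force(gap, object_width, stiffness)
        peak = max(peak, force)
        error = target - force
        if abs(error) < tol:
            return gap, force, peak
        if force == 0.0:
            gap -= approach_step  # free space: close slowly until contact
        else:
            gap -= gain * error   # in contact: proportional control on the force error
    raise RuntimeError("grasp did not settle")


gap, force, peak = grasp(object_width=0.05, stiffness=2000)
print(gap, force, peak)
```

The small approach step limits the force spike at first contact, and because `stiffness * gain` is below 1 here, the grip force rises toward the target without overshooting it.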

Motion Control and Navigation

Robots no longer need humans to guide them along paths and process flows. AI enables robots to analyze their environment and self-navigate. This capability even applies to the virtual world of software. AI helps robot software processes avoid flow bottlenecks or process exceptions.
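Self-navigation usually starts with path planning over a map of the environment. As a minimal sketch, assuming the map is a small occupancy grid where `#` marks an obstacle, the following Python function uses breadth-first search to find a shortest obstacle-free route between two cells. Real robots commonly use richer planners such as A* on much larger maps; this shows only the core idea.

```python
from collections import deque


def plan_path(grid, start, goal):
    """Shortest 4-connected path on an occupancy grid ('#' = obstacle) via BFS.

    Returns a list of (row, col) cells from start to goal, or None if unreachable.
    """
    rows, cols = len(grid), len(grid[0])
    came_from = {start: None}  # also serves as the visited set
    frontier = deque([start])
    while frontier:
        cell = frontier.popleft()
        if cell == goal:
            break
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] != "#" and (nr, nc) not in came_from):
                came_from[(nr, nc)] = cell
                frontier.append((nr, nc))
    if goal not in came_from:
        return None
    # Walk back from the goal to the start, then reverse.
    path, cell = [], goal
    while cell is not None:
        path.append(cell)
        cell = came_from[cell]
    return path[::-1]


GRID = [
    "....",
    ".##.",
    "....",
]
print(plan_path(GRID, (0, 0), (2, 3)))
```

Because breadth-first search expands cells in order of distance from the start, the first time it reaches the goal it has found a route with the fewest moves.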

Natural Language Processing (NLP) and Real-World Perception

Artificial Intelligence and Machine Learning (ML) help robots better understand their surroundings, recognize and identify patterns, and comprehend data. These improvements increase the robot’s autonomy and decrease reliance on human agents.

These capabilities are key to the future of robotics.

Transfer Learning and AI

Transfer learning is a technique that reuses knowledge gained from solving one problem and applies it to a related problem.

For example, a model trained to identify apples may be reused to identify oranges.

In image recognition, transfer learning takes a model pre-trained on one problem and reuses it on another, related problem.

Typically, only the final layers of the new model are trained, which is cheaper and less time-consuming than training the whole model from scratch.
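The “train only the final layers” idea can be sketched without any framework. Below, a small frozen layer stands in for a pre-trained feature extractor, and only a new logistic-regression output layer is trained to tell apples from oranges. The frozen weights, the three raw input values per fruit, and the training samples are hypothetical. In a framework such as Keras, the same effect is usually achieved by setting `trainable = False` on the pre-trained layers.

```python
import math

# "Pre-trained" feature extractor: weights assumed to come from an earlier task
# (values are hypothetical) and frozen, i.e. never updated on the new task.
FROZEN_W = [
    [0.9, 0.1, -0.2],
    [-0.3, 0.8, 0.4],
]


def extract_features(x):
    """Frozen layer: linear map followed by a ReLU."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in FROZEN_W]


def train_head(samples, labels, lr=0.5, epochs=500):
    """Train only a new logistic-regression output layer on the frozen features."""
    feats = [extract_features(x) for x in samples]  # computed once; never retrained
    w, b = [0.0] * len(FROZEN_W), 0.0
    for _ in range(epochs):
        for f, y in zip(feats, labels):
            logit = sum(wi * fi for wi, fi in zip(w, f)) + b
            p = 1.0 / (1.0 + math.exp(-logit))
            grad = p - y  # gradient of the log-loss with respect to the logit
            w = [wi - lr * grad * fi for wi, fi in zip(w, f)]
            b -= lr * grad
    return w, b


def predict(w, b, x):
    f = extract_features(x)
    return 1 if sum(wi * fi for wi, fi in zip(w, f)) + b > 0 else 0


# Hypothetical raw inputs: (redness, orange-ness, peel texture); 0 = apple, 1 = orange.
samples = [(1.0, 0.1, 0.2), (0.9, 0.2, 0.1), (0.2, 1.0, 0.9), (0.3, 0.9, 1.0)]
labels = [0, 0, 1, 1]
w, b = train_head(samples, labels)
print(predict(w, b, (0.25, 0.95, 0.85)))
```

Only `w` and `b` change during training; `FROZEN_W` is read but never written, which is why this step is cheap compared with training every layer.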

Transfer learning also applies to mobile devices performing inference at the edge, i.e., the model is trained in the cloud and deployed on the edge device. TensorFlow Lite / TensorFlow Mobile illustrate this idea well; see, for example, “Mobile Object Detection using TensorFlow Lite and Transfer Learning” (a large PDF).

The same principle applies to robotics, i.e., the model could be trained in the cloud and deployed on the robot itself.

This approach is useful in many cases where the robot may not have access to the cloud (for example, when it is offline). In addition, robots can use transfer learning to train other robots.
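The train-in-the-cloud, run-on-the-device pattern can be sketched in a few lines: the cloud side serializes a trained model, and the robot loads it once and then runs inference without any network access. The JSON payload format, the `export_model` function, the `EdgeModel` class, and the weight values below are hypothetical stand-ins. With TensorFlow, the analogous steps are converting a trained model with the TensorFlow Lite converter and running it with the TensorFlow Lite interpreter on the device.

```python
import json
import math


def export_model(weights, bias, labels):
    """Cloud side: package a trained model as a small, portable JSON payload."""
    return json.dumps({"format_version": 1, "weights": weights,
                       "bias": bias, "labels": labels})


class EdgeModel:
    """Device side: load the payload once, then run inference with no network access."""

    def __init__(self, payload):
        spec = json.loads(payload)
        self.weights = spec["weights"]
        self.bias = spec["bias"]
        self.labels = spec["labels"]

    def predict(self, features):
        """Return (label, probability of the second label) for one feature vector."""
        logit = sum(w * f for w, f in zip(self.weights, features)) + self.bias
        prob = 1.0 / (1.0 + math.exp(-logit))
        return (self.labels[1] if prob >= 0.5 else self.labels[0]), prob


# Hypothetical values standing in for weights trained in the cloud.
payload = export_model([-2.0, 6.0], -1.0, ["apple", "orange"])
model = EdgeModel(payload)  # on the robot: everything below works offline
print(model.predict([0.1, 1.1]))
```

Keeping the payload small and self-describing is the design point: the robot needs only the file and a few lines of inference code, not the training pipeline or a connection to the cloud.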

Conclusion

The future of robotics is an exciting one for humans to embrace. Robots are created to make life easier for humans. However, many people fear the speed at which robotics and AI are combining to do work that humans are capable of doing.

In addition, some companies are replacing staff with robots to cut labor costs and improve accuracy.

At its best, the future of robotics is one in which robots boost human productivity rather than take over human roles entirely.

Frequently Asked Questions

Virtually every field you can think of, but the most notable are healthcare, the military, logistics, entertainment, education, home life, and manufacturing.

By 2030, robotics might become more sophisticated and powerful than some of our own biological functions, and such systems might even be programmable. Initial examinations, tests, X-rays, MRIs, diagnoses, and even treatment could all be performed by AI.

A robotics engineer is a behind-the-scenes designer responsible for creating robots and robotic systems that are able to perform duties that humans are either unable or prefer not to complete. For example, the Roomba was created to help humans with the mundane task of vacuuming floors.

Robotics for kids refers to any activity that helps children understand robotics engineering. Robotics-related games and kits can keep kids’ little hands busy building something entertaining, while more in-depth courses can help kids learn more about the design and uses of robots.

Robotics engineers spend much of their time developing the strategies and processes required not only to design effective robots but also to build them. Some robotics engineers also create the tools used to build the robots themselves.

